Before You Begin

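A compatible Linux host. The Kubernetes project provides generic instructions for Debian-based and Red Hat-based Linux distributions, as well as for distributions that do not ship a package manager.

2 GB or more of RAM per machine (any less leaves little memory for your applications).

2 or more CPU cores.

Full network connectivity between all machines in the cluster (a public or private network is fine).

No duplicate hostnames, MAC addresses, or product_uuid values among the nodes. See the official kubeadm installation documentation for details.

Certain ports must be open on the machines. See the official kubeadm installation documentation for details.

Swap disabled. You must disable swap for kubelet to work properly.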

| Role | IP |
|---|---|
| master | 192.168.235.190 |
| node1 | 192.168.235.191 |
| node2 | 192.168.235.192 |

Run on all hosts:

Disable the firewall:

# systemctl disable --now firewalld

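Disable SELinux (the config change takes effect after a reboot; run setenforce 0 to switch to permissive mode immediately):

# sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config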

Disable swap:

# vim /etc/fstab

Comment out or delete the swap line, for example:

#/dev/mapper/cs-swap none swap defaults 0 0

Or comment it out with sed instead of editing by hand:

# sed -i 's/.*swap.*/#&/' /etc/fstab
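
The sed one-liner above prepends another # every time it runs and also touches lines that are already commented. A minimal sketch of an idempotent variant follows; `comment_swap_lines` is a hypothetical helper name, the `cs-root` line is made-up sample data, and the demo works on a scratch file rather than the real /etc/fstab.

```shell
# comment_swap_lines FILE: comment out active swap entries in an fstab-style file.
comment_swap_lines() {
    local file="$1"
    # Skip lines that are already comments; prefix '#' to lines whose type field is "swap".
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Demo on a scratch file with sample entries (assumed content, not a real fstab).
demo=$(mktemp)
printf '%s\n' \
    '/dev/mapper/cs-root / xfs defaults 0 0' \
    '/dev/mapper/cs-swap none swap defaults 0 0' > "$demo"
comment_swap_lines "$demo"
comment_swap_lines "$demo"   # a second run leaves the file unchanged
cat "$demo"
```

On the real hosts you would run it as root against /etc/fstab, then run `swapoff -a` so that swap is also turned off for the running system without a reboot.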

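Set the hostname. My convention for <hostname> is master for the control-plane host and node1, node2, node3 for the worker nodes. Hostnames must be unique: if you skip this step, kubectl get nodes will later show only one entry.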

# hostnamectl set-hostname <hostname>

Configure time synchronization:

# yum -y install chrony

# systemctl enable --now chronyd

Run on the master host:

Pass bridged IPv4 traffic to the iptables chains:

[root@master ~]# cat > /etc/sysctl.d/k8s.conf << EOF

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> EOF

[root@master ~]# sysctl --system # apply the settings

Permanent alternative: add the following to /etc/sysctl.conf:

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

Then run sysctl -p to reload the settings.
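
The net.bridge.* keys only exist while the br_netfilter kernel module is loaded, so it is worth confirming that the settings actually took effect. A minimal sketch of such a check; `check_bridge_sysctls` is a hypothetical helper name, and the optional argument only exists so the function can be pointed at a directory other than /proc/sys.

```shell
# check_bridge_sysctls [ROOT]: verify both bridge settings read 1 under ROOT (default /proc/sys).
check_bridge_sysctls() {
    local root="${1:-/proc/sys}" key rc=0
    for key in bridge-nf-call-iptables bridge-nf-call-ip6tables; do
        if [ ! -r "$root/net/bridge/$key" ]; then
            echo "missing: net.bridge.$key (is the br_netfilter module loaded?)"
            rc=1
        elif [ "$(cat "$root/net/bridge/$key")" != "1" ]; then
            echo "disabled: net.bridge.$key"
            rc=1
        else
            echo "ok: net.bridge.$key"
        fi
    done
    return "$rc"
}

# Report the current state; suggest the usual fix when a key is missing or disabled.
check_bridge_sysctls || echo "try: modprobe br_netfilter, then re-run sysctl --system"
```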

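Add hosts entries on master. Use your hosts' real IPs: the role table above lists 192.168.235.190-192, while the sample entries here and some of the command output later in this article come from a run on 192.168.10.201-203: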

[root@master ~]# cat >> /etc/hosts << EOF

> 192.168.10.201 master master.example.com

> 192.168.10.202 node1 node1.example.com

> 192.168.10.203 node2 node2.example.com

> EOF
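
The heredoc above appends unconditionally, so running it twice duplicates the entries. A minimal sketch of an idempotent alternative; `add_host_entry` is a hypothetical helper name, and the demo writes to a scratch file instead of /etc/hosts.

```shell
# add_host_entry FILE IP NAME...: append "IP NAME..." unless FILE already has a line for IP.
add_host_entry() {
    local file="$1" ip="$2"
    shift 2
    # awk exits 0 only when some line's first field equals the IP.
    if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$file"; then
        echo "skip: $ip already listed in $file"
    else
        printf '%s %s\n' "$ip" "$*" >> "$file"
    fi
}

# Demo on a scratch file (point it at /etc/hosts on the real hosts).
hosts_file=$(mktemp)
add_host_entry "$hosts_file" 192.168.10.201 master master.example.com
add_host_entry "$hosts_file" 192.168.10.202 node1 node1.example.com
add_host_entry "$hosts_file" 192.168.10.203 node2 node2.example.com
add_host_entry "$hosts_file" 192.168.10.201 master master.example.com   # skipped, no duplicate
cat "$hosts_file"
```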

Passwordless SSH authentication:

[root@master ~]# ssh-keygen -t rsa

[root@master ~]# ssh-copy-id master

[root@master ~]# ssh-copy-id node1

[root@master ~]# ssh-copy-id node2

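Install Docker/kubeadm/kubelet

With Kubernetes 1.20, the version installed here, kubelet can use Docker as its container runtime (through the built-in dockershim), so install Docker first.

Install Docker on all hosts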

# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# yum -y install docker-ce

# systemctl enable --now docker

# docker --version

# cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://lngv2rof.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2"

}

EOF

Restart Docker so that the new daemon.json takes effect:

# systemctl restart docker

Add the Alibaba Cloud Kubernetes YUM repository on all hosts

Source: the Kubernetes mirror page on the Alibaba open source mirror site (aliyun.com)

# cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install kubeadm, kubelet, and kubectl on all hosts

Because new versions are released frequently, pin the version explicitly:

# yum install -y kubelet-1.20.0 kubeadm-1.20.0 kubectl-1.20.0

Important: do not start kubelet after installation; only enable it to start at boot.

# systemctl enable kubelet

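Deploy the Kubernetes Master

Run on the master host

Flag notes: --apiserver-advertise-address is the IP of the Kubernetes master host, --image-repository points to the Alibaba Cloud image registry, and --kubernetes-version is the Kubernetes version installed above. Comments cannot follow a trailing backslash, so they are listed here instead of inline.

[root@master ~]# kubeadm init \
--apiserver-advertise-address=192.168.235.190 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.20.0 \
--service-cidr=10.96.0.0/12 \
--pod-network-cidr=10.244.0.0/16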

[init] Using Kubernetes version: v1.20.0

[preflight] Running pre-flight checks

[WARNING FileExisting-tc]: tc not found in system path

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.12. Latest validated version: 19.03

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master.example.com] and IPs [10.96.0.1 192.168.10.201]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master.example.com] and IPs [192.168.10.201 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master.example.com] and IPs [192.168.10.201 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 12.502159 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.20" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master.example.com as control-plane by adding the labels "node-role.kubernetes.io/master=''" and "node-role.kubernetes.io/control-plane='' (deprecated)"

[mark-control-plane] Marking the node master.example.com as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 0lsupo.vxs0s7w6l78okdod

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.235.190:6443 --token leukyd.7clxo0nzo8xqlmrh \

--discovery-token-ca-cert-hash sha256:33b43ab5c2a92b7513c5a1a15f9b46fb21ff924c0a14957ced6a3a4d0969b665

Save the kubeadm join command above in a temporary file; it has to be run later on the node servers:

[root@master ~]# vim init

# Save the following content

kubeadm join 192.168.235.190:6443 --token leukyd.7clxo0nzo8xqlmrh \

--discovery-token-ca-cert-hash sha256:33b43ab5c2a92b7513c5a1a15f9b46fb21ff924c0a14957ced6a3a4d0969b665
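
If the saved command gets lost, the value after sha256: can be recomputed on the master from the cluster CA certificate. The sketch below follows the openssl pipeline given in the official kubeadm documentation; `ca_cert_hash` is a hypothetical helper name, and the pipeline assumes an RSA CA key, which is what kubeadm generates by default.

```shell
# ca_cert_hash FILE: print the SHA-256 of the certificate's DER-encoded public key.
ca_cert_hash() {
    openssl x509 -pubkey -noout -in "$1" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# Path written by kubeadm init (see the [certs] lines in the output above).
ca=/etc/kubernetes/pki/ca.crt
if [ -r "$ca" ]; then
    echo "--discovery-token-ca-cert-hash sha256:$(ca_cert_hash "$ca")"
else
    echo "$ca is not readable; run this on the master host as root"
fi
```

Bootstrap tokens expire after 24 hours by default. When that happens, `kubeadm token create --print-join-command` on the master prints a complete, fresh join command.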

Set the environment variable so that kubectl can be used

[root@master ~]# echo 'export KUBECONFIG=/etc/kubernetes/admin.conf' > /etc/profile.d/k8s.sh

[root@master ~]# source /etc/profile.d/k8s.sh

Non-root users also need the following steps before they can use kubectl:

$ mkdir -p $HOME/.kube

$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

$ sudo chown $(id -u):$(id -g) $HOME/.kube/config

$ kubectl get nodes

View the current images and containers

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-proxy v1.20.0 10cc881966cf 12 months ago 118MB

registry.aliyuncs.com/google_containers/kube-apiserver v1.20.0 ca9843d3b545 12 months ago 122MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.20.0 b9fa1895dcaa 12 months ago 116MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.20.0 3138b6e3d471 12 months ago 46.4MB

registry.aliyuncs.com/google_containers/etcd 3.4.13-0 0369cf4303ff 15 months ago 253MB

registry.aliyuncs.com/google_containers/coredns 1.7.0 bfe3a36ebd25 18 months ago 45.2MB

registry.aliyuncs.com/google_containers/pause 3.2 80d28bedfe5d 22 months ago 683kB

[root@master ~]#

[root@master ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

3211d07aea83 10cc881966cf "/usr/local/bin/kube…" 15 minutes ago Up 15 minutes k8s_kube-proxy_kube-proxy-59qr6_kube-system_c3cfcd07-6dd3-47ca-882b-246860ac6452_0

42a2b6d506df registry.aliyuncs.com/google_containers/pause:3.2 "/pause" 15 minutes ago Up 15 minutes k8s_POD_kube-proxy-59qr6_kube-system_c3cfcd07-6dd3-47ca-882b-246860ac6452_0

188cd1666a9d 3138b6e3d471 "kube-scheduler --au…" 15 minutes ago Up 15 minutes k8s_kube-scheduler_kube-scheduler-master.example.com_kube-system_0378cf280f805e38b5448a1eceeedfc4_0

0ea1a7607bbd ca9843d3b545 "kube-apiserver --ad…" 15 minutes ago Up 15 minutes k8s_kube-apiserver_kube-apiserver-master.example.com_kube-system_26147bc137c63c92fa90fcbaf5bffa1b_0

9b99bda3213c 0369cf4303ff "etcd --advertise-cl…" 15 minutes ago Up 15 minutes k8s_etcd_etcd-master.example.com_kube-system_55560107e174c866995e72ad649df97e_0

cd369525a53f b9fa1895dcaa "kube-controller-man…" 15 minutes ago Up 15 minutes k8s_kube-controller-manager_kube-controller-manager-master.example.com_kube-system_5c575d17517839b576ab4817fd06353f_0

880f36df090a registry.aliyuncs.com/google_containers/pause:3.2 "/pause" 15 minutes ago Up 15 minutes k8s_POD_kube-scheduler-master.example.com_kube-system_0378cf280f805e38b5448a1eceeedfc4_0

b5b782317b59 registry.aliyuncs.com/google_containers/pause:3.2 "/pause" 15 minutes ago Up 15 minutes k8s_POD_kube-controller-manager-master.example.com_kube-system_5c575d17517839b576ab4817fd06353f_0

51164916d695 registry.aliyuncs.com/google_containers/pause:3.2 "/pause" 15 minutes ago Up 15 minutes k8s_POD_kube-apiserver-master.example.com_kube-system_26147bc137c63c92fa90fcbaf5bffa1b_0

aa0215a17700 registry.aliyuncs.com/google_containers/pause:3.2 "/pause" 15 minutes ago Up 15 minutes k8s_POD_etcd-master.example.com_kube-system_55560107e174c866995e72ad649df97e_0

[root@master ~]#

View the cluster nodes

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane,master 16m v1.20.0

# The node is NotReady at this stage because no Pod network add-on (CNI) has been installed yet

Install a Pod network add-on (CNI) on the master host

[root@master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

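If the URL cannot be reached, try the following.

The cause is that DNS resolution for raw.githubusercontent.com is poisoned, so the host cannot be reached.

Go to https://www.ipaddress.com/ and look up the IP address of raw.githubusercontent.com (199.232.96.133 at the time of writing), then add this line to /etc/hosts: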

199.232.96.133 raw.githubusercontent.com

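Save the file. After that, wget http://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml can fetch kube-flannel.yml.

If the connection still fails, open the link in a browser, copy its content into a local file, and apply that file instead: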

[root@master ~]# vi flannel.yaml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
# ... (remainder omitted)

[root@master ~]# kubectl apply -f flannel.yaml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

[root@master ~]#

6. Join the Kubernetes Nodes

Run the saved join command on each node

[root@node1 ~]# kubeadm join 192.168.10.201:6443 --token 0lsupo.vxs0s7w6l78okdod \

--discovery-token-ca-cert-hash sha256:eac059c0df6a4e49e749580cf88b4412c52a68c264aa77cca36ef4dc86eb8dfa

[preflight] Running pre-flight checks

[WARNING FileExisting-tc]: tc not found in system path

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.12. Latest validated version: 19.03

[WARNING Hostname]: hostname "node1.example.com" could not be reached

[WARNING Hostname]: hostname "node1.example.com": lookup node1.example.com on 114.114.114.114:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

# Same as on node1

[root@node2 ~]# kubeadm join 192.168.10.201:6443 --token 0lsupo.vxs0s7w6l78okdod \

--discovery-token-ca-cert-hash sha256:eac059c0df6a4e49e749580cf88b4412c52a68c264aa77cca36ef4dc86eb8dfa

If the following errors appear:

[preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists

[ERROR FileAvailable--etc-kubernetes-bootstrap-kubelet.conf]: /etc/kubernetes/bootstrap-kubelet.conf already exists

[ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

# Reset kubeadm

kubeadm reset

# Remove the Kubernetes config and certificate files

rm -rf /etc/kubernetes/kubelet.conf /etc/kubernetes/pki/ca.crt

Then reboot and run the join command again:

kubeadm join 192.168.10.201:6443 --token 0lsupo.vxs0s7w6l78okdod \

--discovery-token-ca-cert-hash sha256:eac059c0df6a4e49e749580cf88b4412c52a68c264aa77cca36ef4dc86eb8dfa

Check on the master host

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 10m v1.20.0

node1 Ready <none> 12m v1.20.0

node2 Ready <none> 12m v1.20.0
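
With more than a handful of nodes it is easier to list only the ones that still need attention. A minimal sketch; `not_ready_nodes` is a hypothetical helper name, and the sample input is adapted from the kubectl outputs shown in this article.

```shell
# not_ready_nodes: read `kubectl get nodes` output on stdin and print the
# names of nodes whose STATUS column does not start with "Ready".
not_ready_nodes() {
    awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}

# Sample input; on the cluster, run: kubectl get nodes | not_ready_nodes
not_ready_nodes <<'EOF'
NAME STATUS ROLES AGE VERSION
master NotReady control-plane,master 16m v1.20.0
node1 Ready <none> 12m v1.20.0
node2 Ready <none> 12m v1.20.0
EOF
```

An empty result means every node reports Ready.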

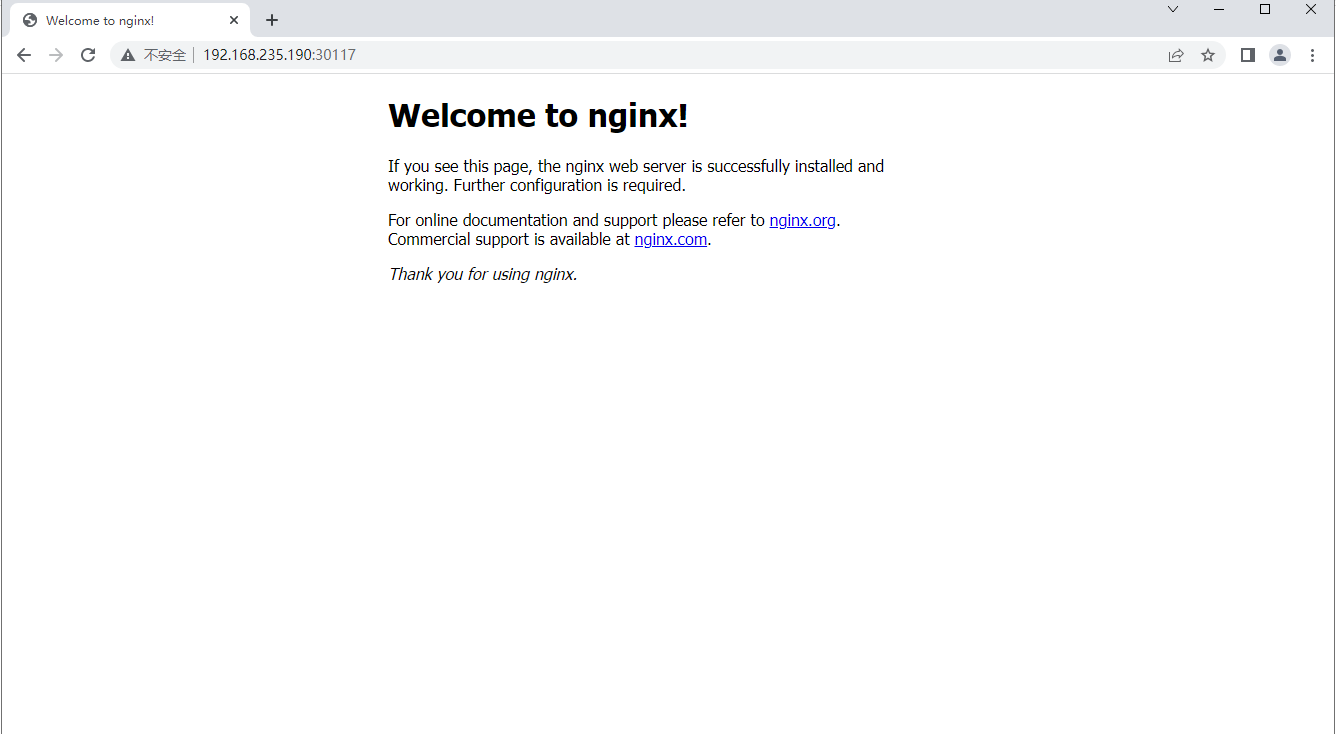

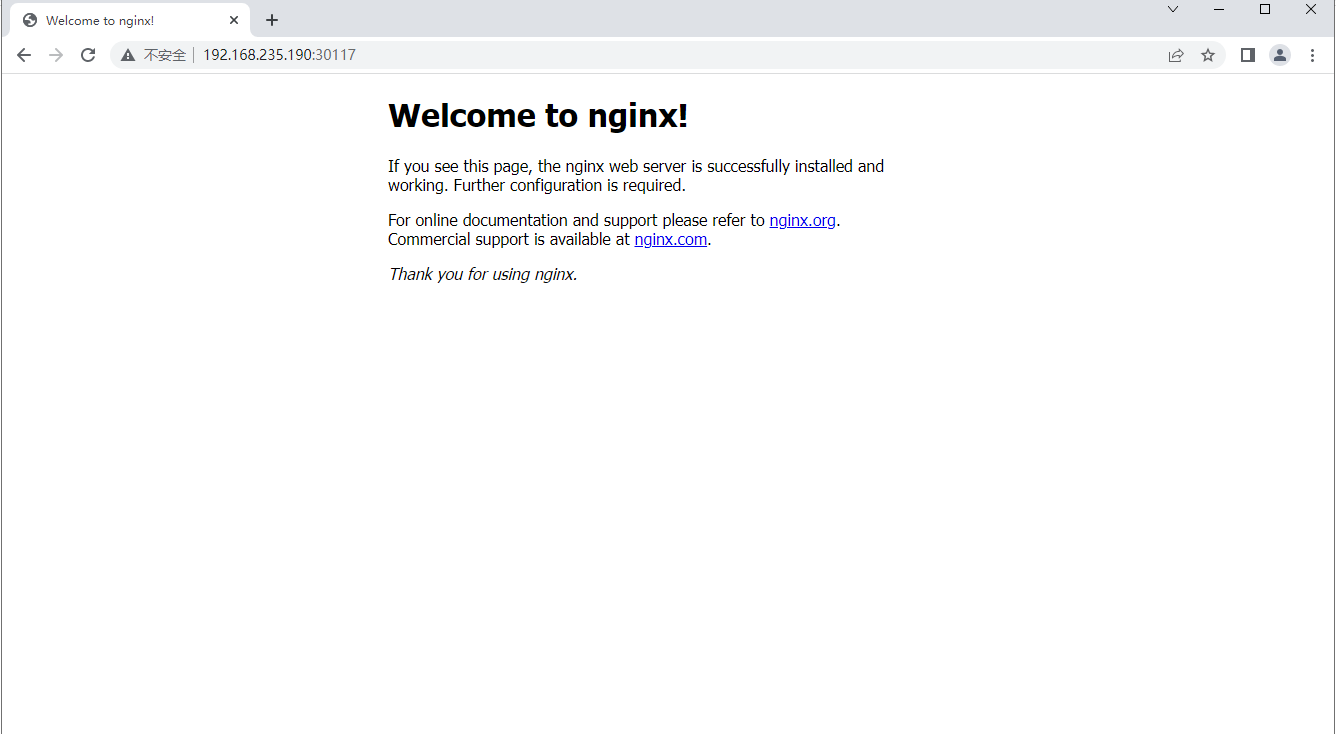

7. Test the Kubernetes Cluster

# Create a Deployment named nginx from the nginx image; no node is specified, so the scheduler picks one

[root@master ~]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

# Expose port 80 of the nginx Deployment on the nodes through a NodePort Service

[root@master ~]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

# Check the Service and the Pod

[root@master ~]# kubectl get svc,pod

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 51m

service/nginx NodePort 10.103.221.120 <none> 80:30724/TCP 15s

NAME READY STATUS RESTARTS AGE

pod/nginx-6799fc88d8-kdx4m 0/1 ContainerCreating 0 35s

# See which node the Pod is running on

[root@master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-6799fc88d8-kdx4m 1/1 Running 0 73s 10.244.2.2 node2.example.com <none> <none>

# Check the listening ports on the master

[root@master ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 192.168.235.190:2379 0.0.0.0:*

LISTEN 0 128 127.0.0.1:2379 0.0.0.0:*

LISTEN 0 128 192.168.235.190:2380 0.0.0.0:*

LISTEN 0 128 127.0.0.1:2381 0.0.0.0:*

LISTEN 0 128 127.0.0.1:10257 0.0.0.0:*

LISTEN 0 128 127.0.0.1:10259 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 0.0.0.0:30117 0.0.0.0:*

LISTEN 0 128 127.0.0.1:34503 0.0.0.0:*

LISTEN 0 128 127.0.0.1:10248 0.0.0.0:*

LISTEN 0 128 127.0.0.1:10249 0.0.0.0:*

LISTEN 0 128 *:10250 *:*

LISTEN 0 128 *:6443 *:*

LISTEN 0 128 *:10256 *:*

LISTEN 0 128 [::]:22 [::]:*

[root@master ~]#

Open http://<master IP>:<exposed NodePort> in a browser to view the nginx welcome page.
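
The exposed port is the number after the colon in the PORT(S) column. A minimal sketch that extracts it and builds the test URL; `node_port_of` is a hypothetical helper name, the sample lines are copied from the kubectl get svc output above, and the master IP is the one from the role table.

```shell
# node_port_of NAME: read `kubectl get svc` output on stdin and print the
# NodePort of the service called NAME (only for TYPE NodePort).
node_port_of() {
    awk -v svc="$1" '($1 == svc || $1 == "service/" svc) && $2 == "NodePort" {
        split($5, parts, "[:/]")   # "80:30724/TCP" -> parts[2] = "30724"
        print parts[2]
    }'
}

# Sample input; on the cluster, use: kubectl get svc | node_port_of nginx
sample='NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 51m
service/nginx NodePort 10.103.221.120 <none> 80:30724/TCP 15s'
port=$(printf '%s\n' "$sample" | node_port_of nginx)

# Print the command to run against the master; it is not executed here.
echo "curl -I http://192.168.235.190:${port}"
```

Because a NodePort Service listens on every node, the same port should also answer on the node1 and node2 addresses.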